18/02/2026 10:11 AM - One-on-one

Visual

Update

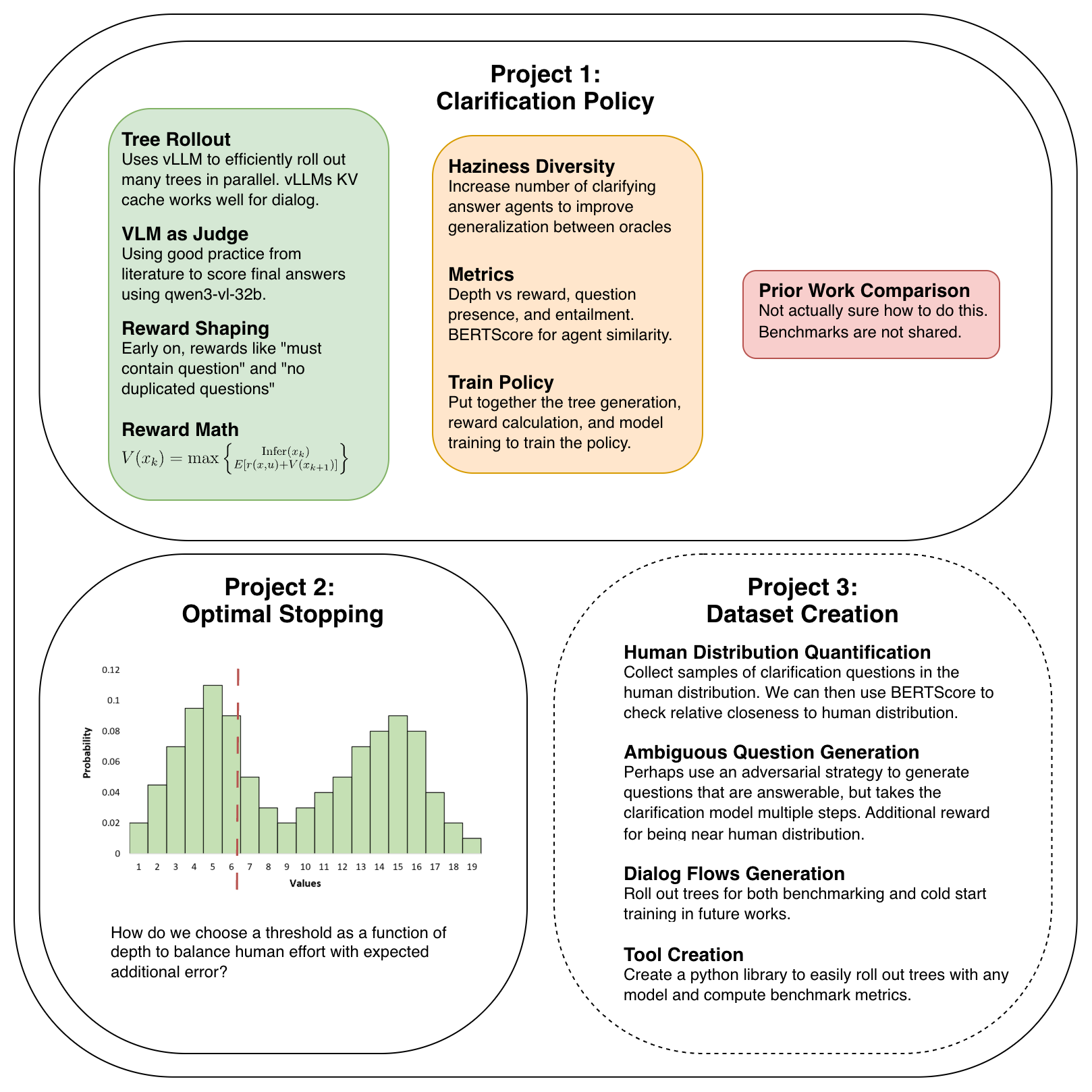

General Update:

Game rollout working

Computing my initial reward function working

* LLM-as-judge from this survey survey as the primary reward. This is different from past works that used an LLM to judge the quality of the clarifying question. Perhaps there is a good reason they did that though. Noisy reward?

* Reward shaping to address common failure modes to ensure inputs to the judge are valid. We assume that within a couple steps of RL we will see these failure modes entirely vanish as they are easy to prevent since they are just style.

* Question presence and length. If a question is not present, model is punished. This is to address the failure mode where the model answers the question instead of further clarifying. This is due to the fact that the model is only trained on single step dialogs so is not yet capable of multi-step dialogs.

* Entailment to prevent asking the same questions multiple times. A common failure mode accross all LLMs is seeing something in the conversation and copying it to the current output. This is common in our model where there is a very obvious clarifying question in which case the model fixates on that and fails to clarify other parts of the ambiguity.

* Game formulation

* Extends GRPO to a tree structured game with optimal stopping

* Optimal stopping:

* This gives us our Bellman equation. $V(x_k) = \max{{\text{Infer}(x_k), E[r(x, u) + V(x_{k+1})] } }$.

* When we hit max depth there is no futher question so we just

* We can also add a discount factor incentivize asking riskier questions to try to get the infer reward now. Currently there is no incentive to asking a risky question because as long as you can eventually get the answer right before hitting max depth you get full reward.

* Basically what we are saying is that we have a perfect infer/defer criterion. If our additional error for inferring now is above 0, we defer. If it is 0 or negative, we infer. This is unrealistic and could be replaced down the line by a model that is learning at the same time to predict AE which would reduce the tendancy to ask overconfident questions.

* Compute relative importance of each decision by computing advantage like we do in GRPO.

* GRPO introduced two main improvements

* Remove the critic in PPO by choosing the baseline to be average value of the Q values leading from the state instead of a learned value function. We use this same strategy.

* Normalize reward by the std of the group. This allows a more dense reward signal than a simple ranking advantage. It preserves the relative reward so if there is something like a bimodal distribution we do not lose that. We do something similar, which differentiates from methods like TPO, where we normalize with the std of all rewards in the tree. That makes advantage reflective of the importance of the question to getting the right answer.

$$

V(x_k) = \max{ \left\lbrace \substack{\text{Infer}(x_k)\ \ E[r(x, u) + V(x_{k+1})]} \right.}

$$

Upcoming Goals

Improve prompts for generating answers and inference.

Better model haziness

Construct a few different answer models to explore how different haziness effects performance. We also should use something like a leave-one-out strategy to check generalization of clarification ability from one answer model to another. Currently my answer model will often give away answers to I think another reasonable answer model would be a yes-no model. That always gives the minimal amount of information, but does not provide answers in the same way a human would. But perhaps until we have better human data it's the best we can do.

Create metrics.

Difference between agent measure using expected entailment.

* Want to measure the probability to two agents produce outputs that contain the same information. We can kind of do this using BERTScore which "measures how much of the original, reference text's meaning is captured by the generated text". So in our case we compute the entailment of agent 2's statements to anything in agent 1's samples.

* The problem with this is it only allows us to get the relative similarity and is not a good absolute measure. Only as $n \to \infty$ does it converge to a single value which is problematic if we are using this to measure similarity between human clarifications and agent clarifications. Although as long as we release a dataset of clarifications and define the measure, the benchmark holds fine. BERTScore is widely accepted as a good metric for generation quality, but I do think a modification to use entailment would make sense for our context.

Reward metrics

* Question presence - Want to see this go to 0 quickly. I also expect to see 0 for depth 1 already, but a dropoff in score when we go to depth 2 and higher.

* Entailment - Want to see this go to 0 quickly. By definition 0 at depth 1, and already low on average for deeper, but still needs to be supressed.

* Inference score - Want to see this improve slowly over iterations as the model learns to ask better questions.

Train the clarification policy

Everything is now in place for this. We can compute trees, rewards, and advantage. RL is always unstable though so I have no idea how long it will take to get the policy training well.

Extended Goals

Topic 1: Clarification Policy

Currently working on this. After training my own clarification policy I need to set up comparisons with prior works. And then it is paper ready.

Topic 2: Optimal Stopping

Extend the infer/defer decision to our continuous domain. This will involve creating a model capable of predicting the distribution of additional error we expect to have so that we can find the probability of causing and error if we infer now.

This allows us to construct the error volume stephan was researching to make the decision of whether to infer or defer.

Topic 3: Dataset

Take the experience gained from the previous topics to construct a dataset of ambiguous VQA.

Construct ambiguous questions. Perhaps we use an adversarial method that trains the question generation policy to get the CQ model to have high depth when it infers correctly. Additionally perhaps use the agent similarity score to get the model to produce outputs near the distribution of human ambiguous questions.

Collect CQs and CAs from real humans. Need multiple with the same parent to be able to define the distribution. Intention is to use these both for evaluation and for training models to ask questions and provide answers like real humans.

Meeting Discussion

Sequential testing

Leo Braiman

Don Geman

- [ ] @TODO Read papers on sequential testing. It relates to the work I am doing now.