25/02/2026 1:23 PM - One-on-one

Visual

Update

This was a low time week. Experiments have been running on lgn9 which has been very inconvenient since I just need to do implementation and testing has been difficult to do.

Discussed with my RL prof about the validity of the math. No complaints from him. He sees it as a reasonable generalization of GRPO to multi-step dialogs interacting with another agent. He was curious where it goes.

Doing the implementation work now:

- Getting the probabilities I need to implement the GRPO loss ($\pi_{old}$, $\pi_{ref}$, $\pi_{\theta}$).

- Creating a new training script that can cycle back and forth between training the policy and rolling out new trees. We will have to see how long it takes to converge before we decide whether it is reasonable to do a leave-one-out strategy to check generalization to different types of haziness.

Heading back to boston again next week. I will be around for meetings again.

Research overlap:

- Semantic video compression for audrey

- predicting prediction error for colin

Upcoming Goals

Better model haziness

Construct a few different answer models to explore how different haziness effects performance. We also should use something like a leave-one-out strategy to check generalization of clarification ability from one answer model to another. Currently my answer model will often give away answers to I think another reasonable answer model would be a yes-no model. That always gives the minimal amount of information, but does not provide answers in the same way a human would. But perhaps until we have better human data it's the best we can do.

Create metrics.

Difference between agent measure using expected entailment.

* Want to measure the probability to two agents produce outputs that contain the same information. We can kind of do this using BERTScore which "measures how much of the original, reference text's meaning is captured by the generated text". So in our case we compute the entailment of agent 2's statements to anything in agent 1's samples.

* The problem with this is it only allows us to get the relative similarity and is not a good absolute measure. Only as $n \to \infty$ does it converge to a single value which is problematic if we are using this to measure similarity between human clarifications and agent clarifications. Although as long as we release a dataset of clarifications and define the measure, the benchmark holds fine. BERTScore is widely accepted as a good metric for generation quality, but I do think a modification to use entailment would make sense for our context.

Reward metrics

* Question presence - Want to see this go to 0 quickly. I also expect to see 0 for depth 1 already, but a dropoff in score when we go to depth 2 and higher.

* Entailment - Want to see this go to 0 quickly. By definition 0 at depth 1, and already low on average for deeper, but still needs to be supressed.

* Inference score - Want to see this improve slowly over iterations as the model learns to ask better questions.

Train the clarification policy

Most things are now in place for this. We can compute trees, rewards, and advantage. RL is always unstable though so I have no idea how long it will take to get the policy training well.

Still need the logprobs from the trajectory rollout which I have found a way to get.

Extended Goals

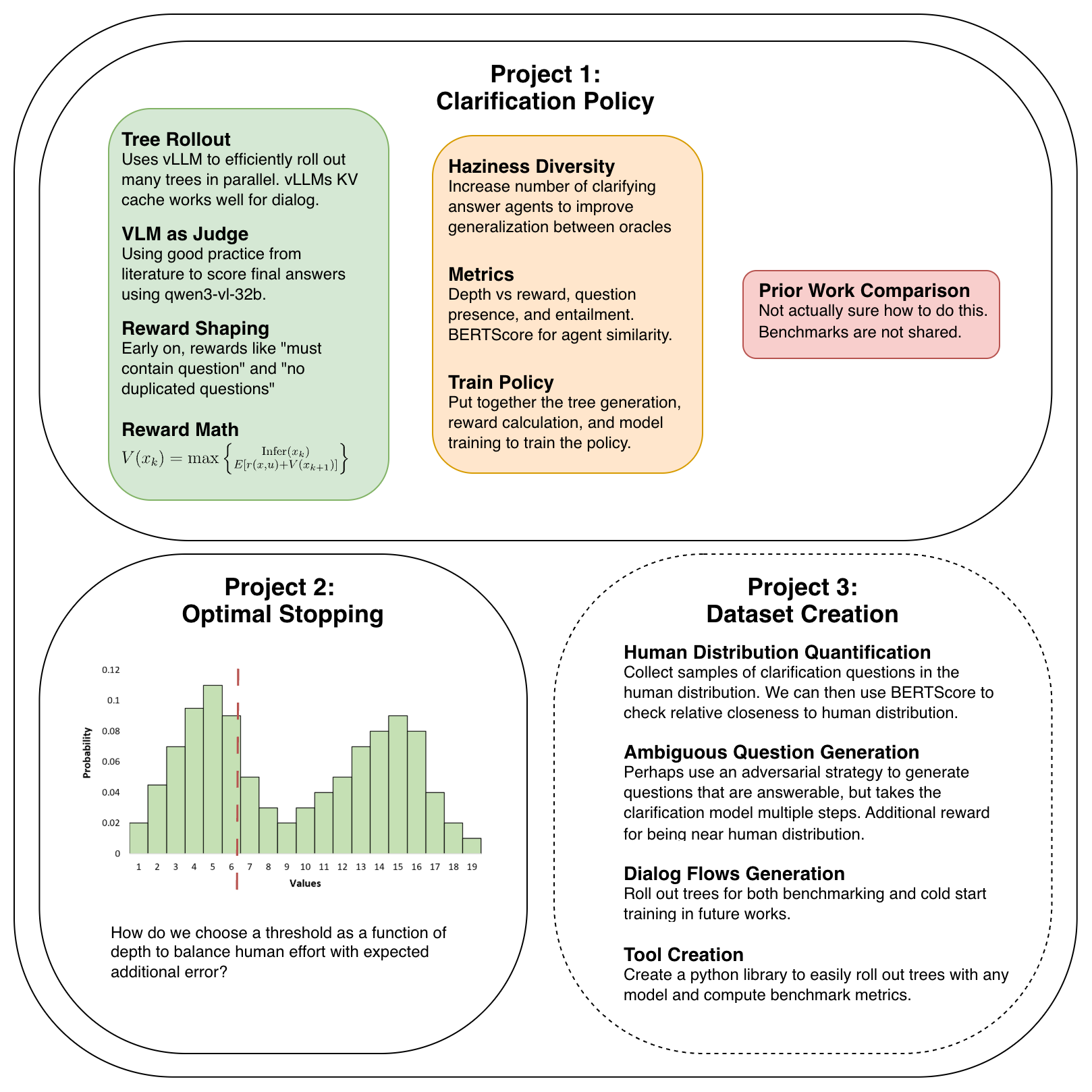

Topic 1: Clarification Policy

Currently working on this. After training my own clarification policy I need to set up comparisons with prior works. And then it is paper ready.

Topic 2: Optimal Stopping

Extend the infer/defer decision to our continuous domain. This will involve creating a model capable of predicting the distribution of additional error we expect to have so that we can find the probability of causing and error if we infer now.

This allows us to construct the error volume stephan was researching to make the decision of whether to infer or defer.

- We can look into either discretizing the error distribution or using something more complex like normalizing flows to make the hidden state of the neural network to a distribution of expected errors.

- Does cross entropy still work here if we discretize? Nearer bins are better than further ones now so it is not categorical. This must be a solved problem. It is probably just a different loss when you have a discretized distribution and you are learning from samples.

- Yea, we just use earth movers distance. It has a closed form solution in this 1D histogram case and seems to be the standard loss for learning discrete distributions.

- Ordinal regression loss is another option

- When we roll out the tree we get a few samples of the error coming from each branch so we can learn a much more dense signal. The question then actually becomes how do we decide what the probability of getting each inference leaf is when we don't have a decision rule yet because it depends on this model. We can pseudolabel with the current state of the model? That basically turns this into another RL problem. Would that training be stable?

Topic 3: Dataset

Take the experience gained from the previous topics to construct a dataset of ambiguous VQA.

Construct ambiguous questions. Perhaps we use an adversarial method that trains the question generation policy to get the CQ model to have high depth when it infers correctly. Additionally perhaps use the agent similarity score to get the model to produce outputs near the distribution of human ambiguous questions.

Collect CQs and CAs from real humans. Need multiple with the same parent to be able to define the distribution. Intention is to use these both for evaluation and for training models to ask questions and provide answers like real humans.

Meeting Discussion

Do any chess engines compute uncertainty at the end.

- [ ] @TODO How do we scale the dataset creation? Founder of duolingo has papers with insights into this.

- [ ] @TODO Respond to undergrad email about dataset creation.